Reducing food waste through microbial quality control

In this series of articles, Nikki Hancocks, Innovation and Trends Editor at NutraIngredients, discusses some of the key issues and challenges facing the nutraceutical and food ingredient industry today.

Globally, as much as one-third of the food produced for human consumption is lost or wasted every year. One reason food may be discarded is the presence of microbes that can cause spoilage or illness. This makes proper food safety and chain control in food development and production a key aspect of reducing food waste. Microbiology and Food Safety expert Robyn Eijlander from NIZO discusses how to ensure and control microbial quality when adding novel ingredients or probiotics, or adapting processes.

Robyn Eijlander: Consumers constantly demand ‘new’, ‘better’ or ‘healthier’ foods. To meet this demand, manufacturers may turn to novel ingredients or adapt their production processes. But this can open the door to new microbial contaminants, which may behave in unexpected ways. Microbes that were never a problem before may now survive and emerge in finished products.

Another consumer trend is for ‘cleaner’, more ‘natural’ foods. But reducing traditional preservatives such as salt or sugar, or replacing them with other ingredients or preservation methods, can affect microbial stability, for instance in shelf-stable foods like sauces.

We also see a desire to add more probiotics to foods. These microbes must not only be safe, but also survive product formulation and ingestion. Probiotic bacterial spore formers can be selected for heat resistance, to ensure stability and robustness during food processing. However, when these strains re-enter the food chain down the line, through plant-based ingredients or raw milk, they could negatively affect food stability and shelf life.

Overall, whenever there is a change in ingredients, formulation or processing, you should look into the potential risks. Spoilage or consumer health risks can damage your brand and reputation, and fixing problems after the fact is more costly than identifying and addressing them early on.

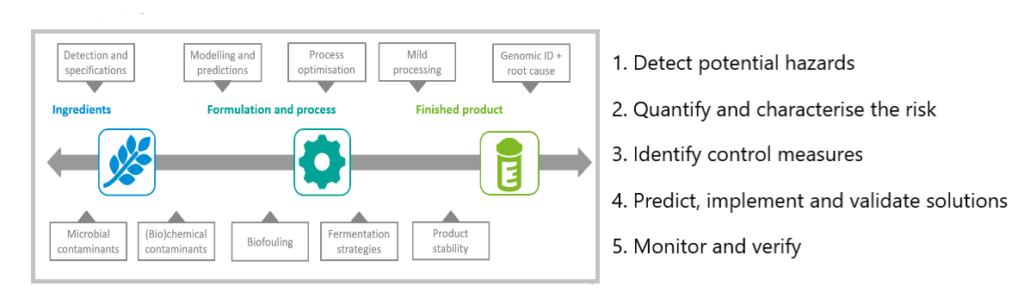

RE: The first step is to detect potential hazards. If there is a microbial contaminant, you should determine its identity and characteristics: pathogen or spore former, heat- or acid-resistant, biofilm former?

Then, you need to quantify and characterise the risk: predicting the microbe’s growth and inactivation, as well as its potential to produce toxins that might persist even after the bacteria themselves are inactivated, for example. And you want to determine which circumstances could support the presence or growth of the bacteria or toxins.

In the third step, you identify ways to control the contamination, and in the fourth step, you predict, implement and validate your solutions. In step 5, you then monitor and verify your control measures.

Schematic representation of a step-wise approach to ensure safe and microbially stable food throughout the production chain.

RE: Not necessarily, but by advancing step by step, you cover all the bases. Steps 1 and 2, for example, don’t require a lot of time or resources, but they provide critical insight into microbial safety and quality at an early stage of product development.

And they will tell you whether steps 3 and 4 are necessary: if you are facing a microbial quality or contamination risk, additional characterisation of the contamination and effective control measures are important. After all, it is better to prevent a problem beforehand than to solve it afterwards! Finally, monitoring the output of your process and verifying the impact of your control measures is a standard part of food processing, and can be either part of this multi-step process or a stand-alone action. If you already have your production line set up and you aren’t having any specific issues, monitoring and verifying may be enough. However, identifying, quantifying and characterising potential risks can provide useful insights for optimising this step.

RE: The most appropriate approaches will depend on several factors: the type of information you are looking for, the type of product (solid or liquid, dairy or plant-based, etc.) and the type of contaminant (microbial or chemical, pathogen or spoiler, etc.). Selecting the best models and assays requires a multidisciplinary approach that includes knowledge of food manufacturing, formulation and processing, as well as microbiology and even bioinformatics.

Let’s take the example of a yoghurt manufacturer who wants to develop a plant-based version, using novel ingredients and processes. They start by identifying the levels of the most common or dangerous contaminants, such as Listeria, Salmonella or Bacillus cereus, or notorious spoilage microorganisms, such as spore formers of other Bacillus species. Classic plating, combined with 16S rRNA gene sequencing and MALDI-TOF mass spectrometry, is cost-effective and gives good results regarding the presence and identification of these bacterial species.

But it can’t identify low-abundance microbes (<100 CFU/g) or distinguish between strains of bacteria. We know that some pathogenic species have a high strain-to-strain difference in, for instance, toxin-encoding genes. In-depth molecular methods such as PCR gene amplification, whole genome sequencing or metagenomic sequencing, combined with bioinformatics, can provide detailed information on the microbes present. Furthermore, the resulting data can support high-resolution tracking and tracing of notorious microbial contaminants throughout the production chain.

Next, the risk must be quantified and characterised. Predictive computational models or small-scale lab testing are very effective in determining whether the contaminant grows in the product at certain temperatures and how long it takes to reach critical levels, or if the processes adequately inactivate the microbe. Essentially, does anything need to be changed in the product formulation or thermal processes to improve microbial stability of the finished product? These insights are critical for developing possible control measures and solutions: micro- or lab-scale testing can then identify which is most effective. Challenge tests are also useful for evaluating the product’s shelf life under specific conditions. In step 5, the manufacturer is verifying the impact of the control measures; regular quantitative microbial risk assessments (QMRA) can help ensure microbial quality and safety over time.
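To make the two questions above concrete (how long until a contaminant reaches a critical level, and whether a heat step inactivates it adequately), here is a minimal Python sketch. It is not a method described in the interview: it assumes simple exponential growth with a fixed doubling time and a log-linear (D-value) inactivation model, and all function names and numbers are hypothetical illustrations rather than measured data. Real predictive tools typically use richer models and product-specific parameters.

```python
import math


def hours_to_reach(n0_cfu_g, critical_cfu_g, doubling_time_h, lag_h=0.0):
    """Hours until a population reaches a critical level.

    Assumes simple exponential growth after an optional lag phase
    (a deliberate simplification; parameters are hypothetical).
    """
    if critical_cfu_g <= n0_cfu_g:
        return 0.0
    doublings = math.log2(critical_cfu_g / n0_cfu_g)
    return lag_h + doublings * doubling_time_h


def log_reduction(hold_time_min, d_value_min):
    """Log10 reductions achieved by a heat step.

    Assumes log-linear inactivation, where the D-value is the time
    needed for a tenfold reduction at the process temperature.
    """
    return hold_time_min / d_value_min


def survivors(n0_cfu_g, hold_time_min, d_value_min):
    """Expected surviving count after the heat step (same assumption)."""
    return n0_cfu_g * 10 ** (-log_reduction(hold_time_min, d_value_min))


if __name__ == "__main__":
    # Hypothetical scenario: 100 CFU/g initial load, 100,000 CFU/g limit,
    # 2 h doubling time at storage temperature, no lag phase.
    print(round(hours_to_reach(100, 100_000, 2.0), 1), "hours to critical level")
    # Hypothetical heat step: 10 min hold, D-value of 2 min.
    print(log_reduction(10, 2), "log10 reductions")
```

In this simplified picture, a longer doubling time (for example through lower storage temperature or a changed formulation) extends the time to the critical level, while a longer hold time or a lower D-value increases the number of log reductions.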

NH: What’s in the future for food safety in product development?

RE: Overall, I think there is greater innovation and advancement in food safety and quality when companies consider microbial stability as early as research and development. Many pre- and probiotics manufacturers do so, and are also using modern advances in molecular technology, sequencing, data management and even artificial intelligence. This holistic approach is not yet as common in general food production, but I hope it will be in the future.

There are some interesting technical innovations that could support this evolution. For instance, handheld devices are making sequencing faster and more accessible for all food manufacturers. You take them on-site, load a sample, and have it sequenced immediately. By combining this technology with decision support system software, informed risk assessments can be completed in hours instead of days or weeks. Futuristic technological applications like these are in reach, and very exciting! They can improve track-and-trace, and open up communication about where contamination is coming from and how to mitigate it.